Project Description

Voice recognition systems can be used to address this situation, allowing users to call up information using spoken commands.

Speak+Seek is a system that seeks to do just that, allowing a move away from drop-down lists and form-filling towards a simpler, voice-controlled form of human-computer interaction.

Technology Solution

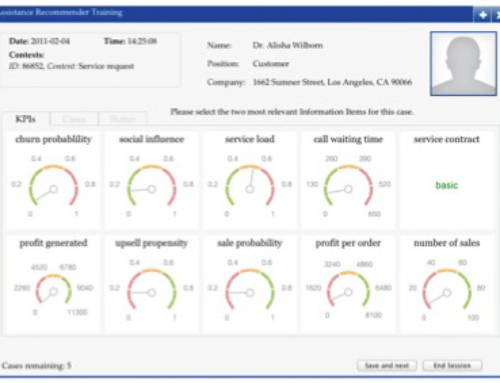

The Speak+Seek system has been developed as an Android smartphone app that can be used to query the data underlying an executive BI dashboard when a user is away from their desk. A typical executive BI dashboard is shown in Figure 1.

Figure 1. A typical executive dashboard (from Nathean’s Logix software)

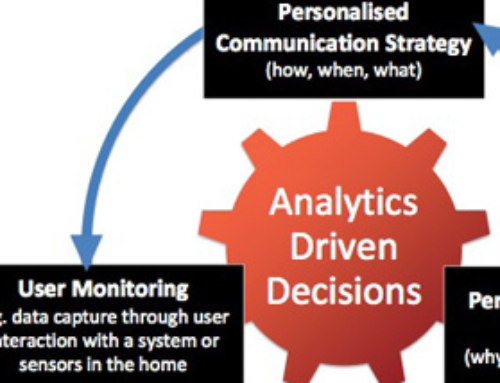

Figure 2. The key components of the Speak+Seek system

Figure 2 shows an overview of the Speak+Seek system. There are six key steps:

- Step 1: a user speaks a natural language query into their smartphone, requesting a piece of information from their BI dashboard

- Step 2: the smartphone speech recognition system activates, transforming the spoken words into text

- Step 3: the recognised text is converted from natural language into a machine-understandable query using a custom concept-spotting parser algorithm

- Step 4: the machine-understandable query is used to extract the requested information from the data model underlying the dashboard

- Step 5: a natural language response is generated from the extracted information using a custom frame-based text generation engine

- Step 6: this natural language response is converted into speech and spoken back to the user (it is also written to the screen)
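To make Steps 3 to 5 more concrete, the following is a minimal Python sketch of how a concept-spotting parser, a data lookup, and a frame-based response generator could fit together. It is not the Speak+Seek implementation: the lexicons, the `DATA` table, the figures in it, the response frame, and all function names are hypothetical and chosen purely for illustration. Speech recognition (Step 2) and speech synthesis (Step 6) are assumed to be handled by the smartphone and are not shown.

```python
import re

# Hypothetical concept lexicons: surface phrases mapped to the concepts
# the parser should spot. A real system would derive these from the
# dashboard's data model.
MEASURES = {"sales": "sales", "revenue": "sales", "profit": "profit"}
REGIONS = {"ireland": "Ireland", "uk": "UK"}
PERIODS = {"last quarter": "last quarter", "this quarter": "this quarter"}

# Hypothetical stand-in for the data model underlying the dashboard.
# The figures are invented for the example.
DATA = {
    ("sales", "Ireland", "last quarter"): 120000,
    ("sales", "UK", "last quarter"): 340000,
    ("profit", "Ireland", "last quarter"): 30000,
}

# Hypothetical response frame with one slot per spotted concept.
FRAME = "The {measure} figure for {region} {period} was {value:,}."


def spot_concepts(text):
    """Step 3: pick out known concepts and ignore all other words."""
    lowered = text.lower()
    query = {}
    slots = (("measure", MEASURES), ("region", REGIONS), ("period", PERIODS))
    for slot, lexicon in slots:
        for phrase, concept in lexicon.items():
            # Whole-word match so that "uk" does not fire inside other words.
            if re.search(r"\b" + re.escape(phrase) + r"\b", lowered):
                query[slot] = concept
                break
    return query


def run_query(query):
    """Step 4: look the requested value up in the data model."""
    key = (query.get("measure"), query.get("region"), query.get("period"))
    return DATA.get(key)  # None if a slot is missing or no data exists


def generate_response(query, value):
    """Step 5: fill the response frame with the extracted information."""
    if value is None:
        return "Sorry, I could not find that information."
    return FRAME.format(value=value, **query)


def answer(text):
    """Run Steps 3 to 5 on the text produced by speech recognition."""
    query = spot_concepts(text)
    return generate_response(query, run_query(query))


if __name__ == "__main__":
    print(answer("What were the sales in Ireland last quarter?"))
    print(answer("How much revenue did we make in the UK last quarter?"))
```

Because the parser only looks for known concepts rather than parsing the full sentence, differently worded questions that mention the same measure, region, and period map to the same query. A question that lacks one of the three concepts falls through to the fallback response in this sketch.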

Figure 3. (a) The mobile app interface; (b) The speech recognition element; (c) The question (blue) and returned answer (red)

The Speak+Seek mobile interface is shown in Figure 3. A user presses the “Press Me!” button in Figure 3a and then speaks their question aloud. The speech recognition system interprets this utterance (Figure 3b), and the question is written to the screen in blue (Figure 3c). The system retrieves the answer to the spoken question and prints it to the screen in red. It also speaks the answer back to the user.

Research Team

- Dr. Eoghan O’Shea, Dublin Institute of Technology

- Dr. Robert Ross, Dublin Institute of Technology

- Dr. Brian Mac Namee, Dublin Institute of Technology